AI-generated manuscripts are becoming increasingly common in engineering journals. While AI can support scientific writing, it can also produce papers that appear valid but lack real technical substance.

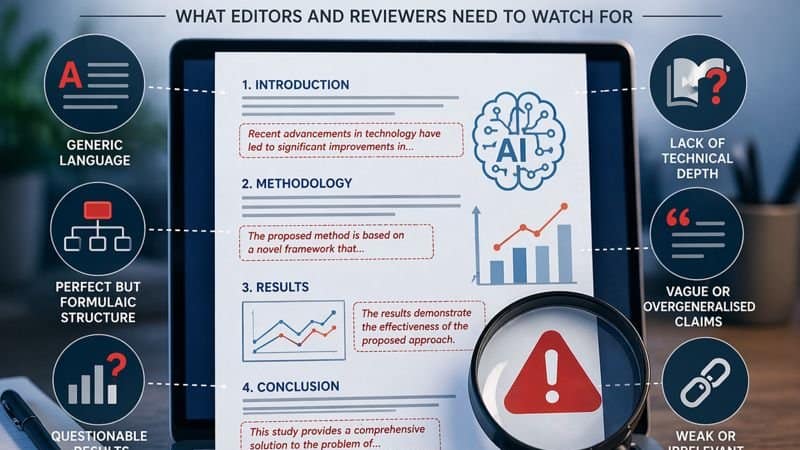

This article provides a practical framework for editors to identify red flags in AI-generated engineering submissions, focusing on linguistic patterns, structural signals, and technical inconsistencies.

What is changing in engineering submissions

Generative AI is now widely used in scientific writing. Used properly, it can improve clarity and efficiency. The problem arises when entire manuscripts are generated, or largely drafted, by AI without disclosure.

In engineering journals, this creates a new type of submission: papers that are grammatically correct, well-structured, and apparently rigorous, but lack real technical substance.

The editorial challenge is not to detect AI use itself, but to identify when AI has replaced actual scientific work.

Linguistic red flags

AI-generated text tends to be fluent but generic. It often lacks the natural variation and precision expected in technical writing.

Common signals include:

- Repetitive sentence structures across sections

- Overuse of neutral, polished language with no domain-specific nuance

- Generic transitions (“Moreover”, “In addition”, “It is worth noting that”)

- Vague claims without quantification

A typical example is a sentence like:

“The results demonstrate the effectiveness of the proposed method”

with no numerical evidence or comparison.
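Editors who want a quick first pass over these linguistic signals can use a few lines of code to count stock transitions and list sentences that make an evaluative claim without a single number. The Python sketch below is a minimal screening aid, not a validated detector. The phrase lists (`GENERIC_TRANSITIONS`, `CLAIM_WORDS`), the function names, and the rule "a claim sentence containing no digit is unquantified" are illustrative assumptions, and the sentence splitter is deliberately naive.

```python
import re

# Illustrative phrase lists (assumptions, not a validated lexicon):
# the transitions quoted in the article plus evaluative words that often
# appear in unquantified claims. Adapt both to the journal's field.
GENERIC_TRANSITIONS = ("moreover", "in addition", "it is worth noting that")
CLAIM_WORDS = ("effective", "superior", "outperform", "significant", "robust")


def split_sentences(text: str) -> list[str]:
    # Naive splitter: breaks after ".", "!" or "?" only when followed by
    # whitespace, so decimals such as 0.42 are not split.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def screen_text(text: str) -> dict:
    """Count stock transitions and list claim sentences that contain no digits."""
    lower = text.lower()
    # Word boundaries keep "in addition" from matching "in additional".
    transitions = {
        phrase: len(re.findall(r"\b" + re.escape(phrase) + r"\b", lower))
        for phrase in GENERIC_TRANSITIONS
    }
    sentences = split_sentences(text)
    # Substring match is intentional: "effective" also catches "effectiveness".
    unquantified = [
        s for s in sentences
        if any(word in s.lower() for word in CLAIM_WORDS)
        and not re.search(r"\d", s)
    ]
    return {
        "sentences": len(sentences),
        "transitions": transitions,
        "unquantified_claims": unquantified,
    }


sample = (
    "The results demonstrate the effectiveness of the proposed method. "
    "Moreover, the approach is robust. "
    "The RMSE drops from 0.42 to 0.31 on the held-out set."
)
report = screen_text(sample)
print(report["transitions"])
print(report["unquantified_claims"])
```

A high count is only a prompt to read the passage closely: human authors also write "Moreover", and a sentence that contains a number is not automatically a supported claim.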

Another indicator is inconsistent terminology. The same concept may be referred to in multiple ways, suggesting automated generation rather than controlled technical writing.

Structural red flags

AI tools tend to produce manuscripts that look structurally perfect but feel artificial.

Typical patterns:

- Rigid IMRaD structure with unusually balanced sections

- Smooth transitions that lack logical depth

- Abstracts that read like compressed full papers

- Conclusions that overstate contributions

These papers often try to cover too many aspects superficially instead of developing a clear technical contribution.

Technical inconsistencies

This is where detection becomes more reliable.

Key issues include:

- Methods described in general terms without parameters or implementation details

- Mismatches between methods and results (e.g., the results refer to a different algorithm from the one described in the methods)

- Unrealistic performance claims without proper benchmarks

- Lack of reproducibility (no code, no dataset, no configuration details)

In engineering, this is critical. A paper may sound correct but fail completely when evaluated at the level of implementation.

Figures, data, and results

AI-generated manuscripts frequently fail in the results section.

Watch for:

- Overly clean or generic plots

- Missing error bars, variance, or uncertainty analysis

- Identical performance patterns across different experiments

- No negative results or limitations

Another common issue is inconsistency between figures and text. The manuscript may describe one experiment while the figure shows something else.
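One narrow way to make "identical performance patterns" concrete is to compute, for each experiment, the relative gain of the proposed method over its best baseline, and then check whether those gains are nearly the same everywhere. The Python sketch below assumes that the editor has transcribed the reported metrics into a dictionary, that higher metric values are better, and that a population standard deviation below 0.5 percentage points across at least three experiments deserves a closer look. The threshold, the function names, and the numbers in the example are hypothetical and serve only to illustrate the check.

```python
from statistics import pstdev


def relative_gains(results: dict[str, dict[str, float]], proposed: str) -> dict[str, float]:
    """Percentage gain of `proposed` over the best other method in each experiment.

    Assumes every experiment lists at least one baseline and that higher
    metric values are better (e.g. accuracy, not error).
    """
    gains = {}
    for experiment, scores in results.items():
        best_baseline = max(v for name, v in scores.items() if name != proposed)
        gains[experiment] = 100.0 * (scores[proposed] - best_baseline) / best_baseline
    return gains


def suspiciously_uniform(gains: dict[str, float], tolerance: float = 0.5) -> bool:
    """Flag when gains barely vary across experiments (hypothetical rule).

    Requires at least three experiments; `tolerance` is in percentage points.
    """
    values = list(gains.values())
    return len(values) >= 3 and pstdev(values) < tolerance


# Hypothetical transcribed results: the same ~10% margin in every experiment.
uniform = {
    "experiment_1": {"proposed": 0.88, "baseline_a": 0.80, "baseline_b": 0.75},
    "experiment_2": {"proposed": 0.66, "baseline_a": 0.60, "baseline_b": 0.58},
    "experiment_3": {"proposed": 0.99, "baseline_a": 0.90, "baseline_b": 0.85},
}
# Hypothetical results where the margin changes from experiment to experiment.
varied = {
    "experiment_1": {"proposed": 0.88, "baseline_a": 0.80, "baseline_b": 0.75},
    "experiment_2": {"proposed": 0.62, "baseline_a": 0.60, "baseline_b": 0.58},
    "experiment_3": {"proposed": 0.93, "baseline_a": 0.90, "baseline_b": 0.85},
}

uniform_gains = relative_gains(uniform, proposed="proposed")
varied_gains = relative_gains(varied, proposed="proposed")
print(suspiciously_uniform(uniform_gains))  # True
print(suspiciously_uniform(varied_gains))   # False
```

A uniform margin proves nothing on its own, because a genuine method can behave consistently. It is a reason to request raw data and per-run results before the manuscript goes to review.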

Citation and context issues

References in AI-generated manuscripts are often weak.

Typical signs:

- Citations that do not support the claims made

- Missing key references in the field

- Overly broad or irrelevant citation lists

Sometimes the references are real, but their integration into the argument is superficial.

Key editorial test

A simple question is often enough:

Can this work be reproduced based on the information provided?

If the answer is no, the problem is not language or formatting: it is a lack of scientific validity.

What to do when you detect AI-generated patterns

Detection should be based on patterns, not isolated signals.

Recommended actions:

- Do not rely on AI detection tools alone

- Evaluate internal consistency across sections

- Request clarifications or raw data if needed

- Desk reject if methodological weaknesses are clear

Avoid focusing on whether AI was used. Focus on whether the manuscript meets scientific standards.

Final insight

AI-generated manuscripts are rarely wrong at the sentence level.

They fail at a deeper level:

- lack of technical depth

- lack of coherence

- lack of reproducibility

That is where editorial decisions should be made.